Since the rise of deep learning, several puzzling phenomena in neural networks have remained poorly understood. One of the most intriguing is the emergence of abilities that these networks weren’t explicitly trained for. For instance, ChatGPT can translate between languages even though its underlying model was pretrained primarily on next-word prediction, and vision-language models can classify images even though they were trained only to associate images with their text descriptions.

One theory related to this phenomenon holds that the representations learned by large models may be converging toward a shared statistical model of reality, which would help them generalize across different tasks and scenarios. This idea is explored in the paper “The Platonic Representation Hypothesis.”

The Platonic Representation Hypothesis proposes that large models trained on different types of data gradually converge toward a shared representation of the world. This shared representation reflects the reality we perceive through our various senses. As models grow in size and are exposed to more diverse data and tasks, their representations become more aligned with each other and with the underlying reality that generated their data.
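To make “more aligned” concrete, here is a minimal sketch of one way to score how similar two models’ representations are: a mutual k-nearest-neighbor overlap, in the spirit of the nearest-neighbor alignment metric discussed in the paper. Given embeddings of the same inputs from two models, it checks how many of each input’s k nearest neighbors agree across the two embedding spaces. The function names, the use of cosine similarity, k = 5, and the toy data (a random latent “world” seen through two different rotations plus noise) are all illustrative assumptions, not the paper’s exact procedure.

```python
import numpy as np


def knn_indices(feats: np.ndarray, k: int) -> np.ndarray:
    """Indices of each row's k nearest neighbors under cosine similarity, excluding the row itself."""
    normed = feats / np.linalg.norm(feats, axis=1, keepdims=True)
    sim = normed @ normed.T
    np.fill_diagonal(sim, -np.inf)  # a sample is not its own neighbor
    return np.argsort(-sim, axis=1)[:, :k]  # top-k most similar rows


def mutual_knn_alignment(feats_a: np.ndarray, feats_b: np.ndarray, k: int = 5) -> float:
    """Mean fraction of k-NN shared by two embeddings of the same inputs (1.0 = identical neighborhoods)."""
    if feats_a.shape[0] != feats_b.shape[0]:
        raise ValueError("both feature matrices must embed the same inputs, row for row")
    nn_a, nn_b = knn_indices(feats_a, k), knn_indices(feats_b, k)
    overlaps = [len(set(a) & set(b)) / k for a, b in zip(nn_a, nn_b)]
    return float(np.mean(overlaps))


# Hypothetical stand-ins: one latent "world" seen through two different rotations plus small noise.
rng = np.random.default_rng(0)
world = rng.normal(size=(200, 16))
rot_a, _ = np.linalg.qr(rng.normal(size=(16, 16)))  # random orthogonal matrix
rot_b, _ = np.linalg.qr(rng.normal(size=(16, 16)))
model_a = world @ rot_a + 0.05 * rng.normal(size=(200, 16))
model_b = world @ rot_b + 0.05 * rng.normal(size=(200, 16))
unrelated = rng.normal(size=(200, 16))  # features that ignore the latent world entirely

aligned_score = mutual_knn_alignment(model_a, model_b)
unrelated_score = mutual_knn_alignment(model_a, unrelated)
print(f"aligned views: {aligned_score:.2f}, unrelated features: {unrelated_score:.2f}")
```

Because the metric only compares neighborhoods, it does not care that the two “models” use different coordinate systems: two views of the same structure score high, while features unrelated to that structure score near chance (about k divided by the number of other samples).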

Plato’s Cave: A Metaphor for AI Learning

The Platonic Representation Hypothesis draws inspiration from Plato’s Allegory of the Cave, a metaphor for the gap between perceived reality and true reality. In the allegory, prisoners in a cave see only shadows of real objects on the wall, mistaking them for the full reality. These shadows are just partial reflections of a more complex world that exists beyond their perception.

Similarly, in the Platonic Representation Hypothesis, AI models are like these prisoners. The data they are trained on—images, text, sounds—are analogous to the shadows, offering incomplete glimpses of a more intricate reality. As these models grow in complexity, they begin to capture more accurate and nuanced representations of the world beyond their immediate training data.

This idea connects to convergent realism from the philosophy of science, which holds that scientific theories gradually converge toward the truth over time. In the same way, the Platonic Representation Hypothesis argues that neural networks, even when trained on different tasks and data modalities, are converging toward a shared and increasingly accurate statistical model of the underlying reality. On this view, the convergence reflects a common model of the world rather than the particulars of any single dataset or training task.

Why Do AI Representations Converge?

As AI models continue to evolve, there are three main reasons why they may be converging toward a shared understanding of the world: task and data diversity, model scale, and simplicity bias.

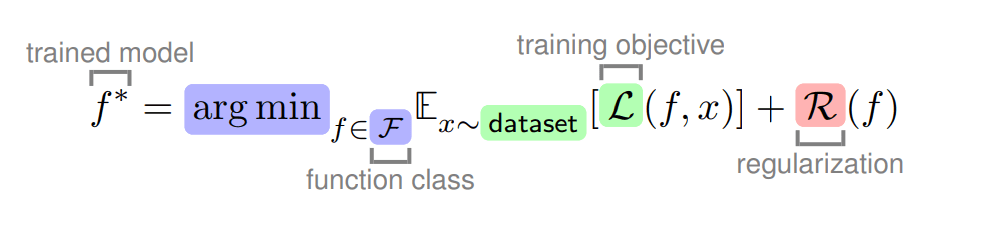

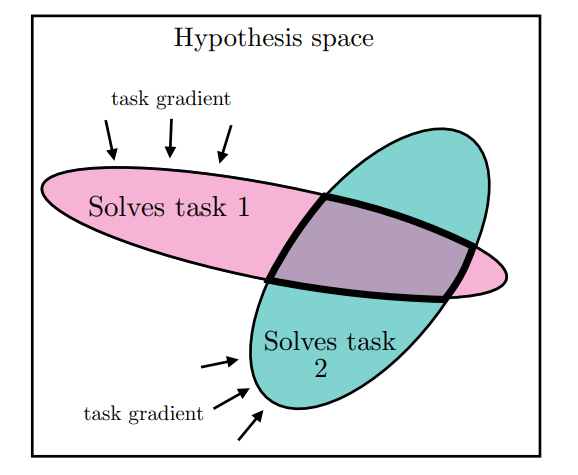

Task diversity and data growth – As the amount of data and the diversity of tasks (learning objectives) grow, the number of representations that can accurately account for both the data and the tasks shrinks. The more tasks a model is trained to solve, the fewer ways it can represent the world while still meeting every requirement. The figure below illustrates this: as models become more general and handle more tasks, the range of valid solutions narrows.

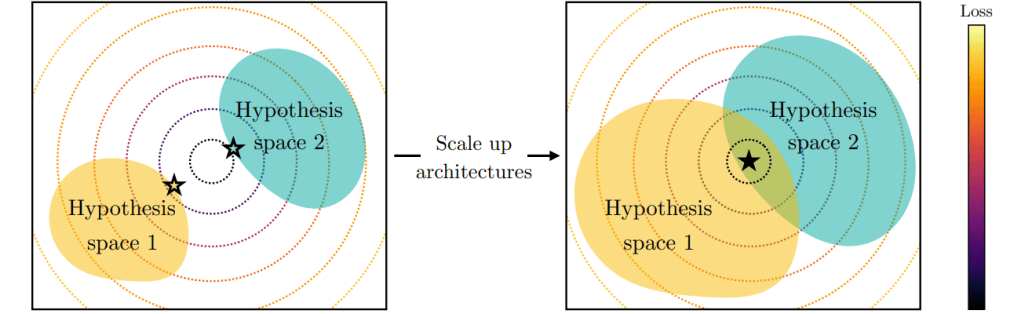
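The narrowing effect described above can be reproduced in a deliberately tiny, hypothetical setup. Every possible lookup table from 3-bit inputs to a binary output is treated as a candidate “solution” (2^8 = 256 of them), and each task is modeled as a constraint that pins down the correct output on a couple of inputs. Counting the candidates that satisfy all tasks seen so far shows the solution space shrinking with every added task. The target function (parity) and the way inputs are split among tasks are arbitrary assumptions; real representations are far richer than lookup tables.

```python
from itertools import product

INPUTS = list(product([0, 1], repeat=3))  # all 8 three-bit inputs


def target(x: tuple) -> int:
    """Hypothetical 'true' function of the world: parity of the three bits."""
    return sum(x) % 2


# Every possible lookup table from the 8 inputs to {0, 1}: 2**8 = 256 candidate solutions.
candidates = [dict(zip(INPUTS, outputs)) for outputs in product([0, 1], repeat=len(INPUTS))]

# Each hypothetical task supervises the target on a different pair of inputs.
tasks = [INPUTS[i:i + 2] for i in range(0, len(INPUTS), 2)]


def consistent(candidate: dict, task_inputs: list) -> bool:
    """True if the candidate matches the target on every input the task supervises."""
    return all(candidate[x] == target(x) for x in task_inputs)


surviving = candidates
counts = [len(surviving)]
for task_inputs in tasks:
    surviving = [c for c in surviving if consistent(c, task_inputs)]
    counts.append(len(surviving))

print(counts)  # [256, 64, 16, 4, 1]
```

Each task fixes two more outputs and so divides the number of valid candidates by four; once all four tasks are imposed, only the target function itself remains.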

Scale of models – The second key factor is model scale. Regardless of architectural differences, larger models are more likely to arrive at similar solutions. As a model’s capacity increases, it can search a larger space of functions and is better able to find near-optimal solutions. These near-optimal solutions tend to be shared across large models, even when the models have different architectures or are trained for different objectives.

Simplicity and Model Biases – The final hypothesis concerns model biases. Deep networks exhibit a simplicity bias, a kind of Occam’s razor: among the many solutions that fit the data, they tend to favor simple ones. Moreover, the larger the model, the stronger this bias appears to be, which further narrows the range of possible representations and points toward a shared one. Explicit regularization techniques, such as weight decay, can reinforce this effect.
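How explicit regularization narrows the set of solutions can be seen in a small, hypothetical example. An underdetermined linear regression (more parameters than examples) has infinitely many weight vectors that fit the data. Plain gradient descent started from two different random initializations ends at two different solutions, while adding weight decay (an L2 penalty) drives both runs to the same unique solution. This is a sketch with synthetic data and a linear model standing in for a deep network; it illustrates explicit regularization only, not the implicit simplicity bias of deep architectures, and the function name `train` and all sizes are illustrative choices.

```python
import numpy as np


def train(X, y, w0, weight_decay, steps=5000):
    """Full-batch gradient descent on 0.5*||Xw - y||^2 + 0.5*weight_decay*||w||^2."""
    # Step size 1/L, where L is the Lipschitz constant of the gradient, keeps GD stable.
    lr = 1.0 / (np.linalg.norm(X, 2) ** 2 + weight_decay)
    w = w0.copy()
    for _ in range(steps):
        grad = X.T @ (X @ w - y) + weight_decay * w
        w -= lr * grad
    return w


rng = np.random.default_rng(0)
X = rng.normal(size=(5, 20))  # 5 examples, 20 parameters: many weight vectors fit the data exactly
y = rng.normal(size=5)
init_a, init_b = rng.normal(size=20), rng.normal(size=20)  # two different "training runs"

distances = {}
for wd in (0.0, 0.5):
    w_a = train(X, y, init_a, wd)
    w_b = train(X, y, init_b, wd)
    distances[wd] = float(np.linalg.norm(w_a - w_b))
    print(f"weight_decay={wd}: distance between the two solutions = {distances[wd]:.4f}")
```

Without weight decay, the gradient never touches the directions the data cannot see, so each run keeps whatever its initialization put there. With weight decay, those directions are shrunk toward zero, and both runs converge to the single ridge-regression solution.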

Implications of Converging Representations

There are several key implications of models converging toward a shared representation. One is that scale might be all you need: larger models could inherently perform better. However, scaling efficiently is difficult and not always practical, and the supply of available training data will eventually reach a saturation point.

Another important consideration is that training models across multiple modalities (e.g., text, images, sound) should, in theory, improve performance within each modality. The constraints contributed by every modality narrow the range of possible solutions, leading the model toward a simpler and more effective representation. This should also make knowledge more transferable across different data types.

Additionally, as models converge toward more accurate representations of reality, hallucinations (a common failure in which large models produce false or nonsensical information) may become less frequent.

Caveats to the Hypothesis

While the Platonic Representation Hypothesis provides an exciting perspective on AI, it’s not without its challenges. The real world is full of randomness and complexity, which simple representations may not always capture. Additionally, the hypothesis implicitly assumes that the data models are trained on all reflect the same underlying world equally well, yet different modalities (like images and text) often carry different kinds of information. For example, an image can convey complex visual information that is difficult to fully capture in a text description, and vice versa.